From Disney…

Recent subjective studies showed that current tone mapping operators either produce disturbing temporal artifacts or are limited in their ability to reproduce local contrast.

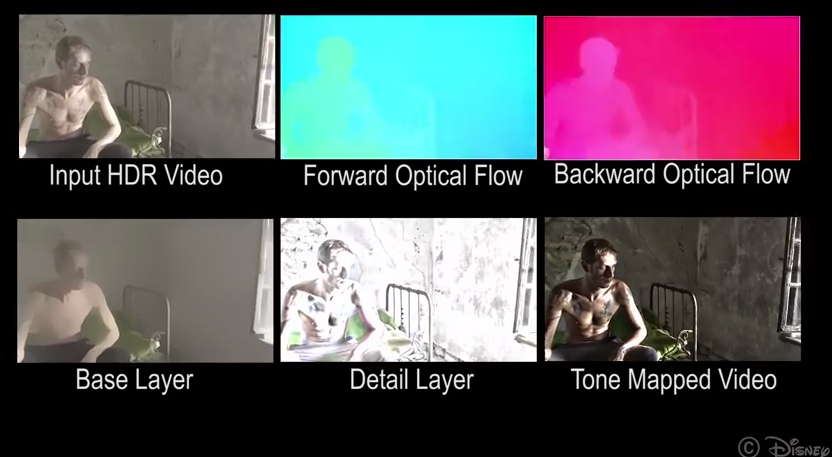

We address both of these issues and present an HDR video tone mapping operator that can greatly reduce the input dynamic range, while at the same time preserving scene details without causing significant visual artifacts. To achieve this, we revisit the commonly used spatial base-detail layer decomposition and extend it to the temporal domain. We achieve high quality spatiotemporal edge-aware filtering efficiently by using a mathematically justified iterative approach that approximates a global solution.
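To make the base-detail idea concrete, the sketch below decomposes log luminance into a smooth base layer and a detail residual, compresses only the base, and recombines the two. It is a minimal illustration under stated assumptions, not the method described here: a plain separable box filter over time and space stands in for the edge-aware spatiotemporal filter, and the names `box_blur` and `tone_map`, along with the `compression`, `radius_space`, and `radius_time` parameters, are hypothetical.

```python
import numpy as np


def box_blur(volume, radius, axis):
    """Mean filter of width 2*radius+1 along one axis, replicating edges."""
    pad = [(0, 0)] * volume.ndim
    pad[axis] = (radius, radius)
    padded = np.pad(volume, pad, mode="edge")
    n = volume.shape[axis]
    out = np.zeros(volume.shape, dtype=np.float64)
    for k in range(2 * radius + 1):
        out += np.take(padded, np.arange(k, k + n), axis=axis)
    return out / (2 * radius + 1)


def tone_map(luminance, compression=0.4, radius_space=2, radius_time=1):
    """Tone map an HDR luminance video of shape (T, H, W).

    Assumption: a box filter replaces the edge-aware filter, so this
    version is NOT edge-aware and will show halos at strong edges.
    """
    log_lum = np.log10(np.maximum(luminance, 1e-6))

    # Base layer: smooth over time (axis 0) and space (axes 1 and 2).
    base = log_lum
    for axis, radius in ((0, radius_time), (1, radius_space), (2, radius_space)):
        base = box_blur(base, radius, axis)

    # Detail layer: whatever the smoothing removed.
    detail = log_lum - base

    # Compress only the base layer; details are added back unchanged.
    compressed = compression * (base - base.max()) + detail
    return 10.0 ** compressed
```

Calling `tone_map(video)` on a `(T, H, W)` array of positive luminance values returns an array of the same shape. Because the box filter smooths across edges, this version produces halos near strong luminance boundaries and blurs the base layer across motion, which is the kind of artifact an edge-aware filter is meant to avoid.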

A qualitative comparison with the state of the art, together with a quantitative comparison through a controlled subjective experiment, clearly shows our method’s advantages over previous work. We present local tone mapping results on challenging high-resolution scenes with complex motion and varying illumination.

We also demonstrate our method’s capability of preserving scene details at user-adjustable scales, and its advantages for low-light video sequences with significant camera noise.